Small investors are piling into this ETF at a record pace. What the frenzy is all about

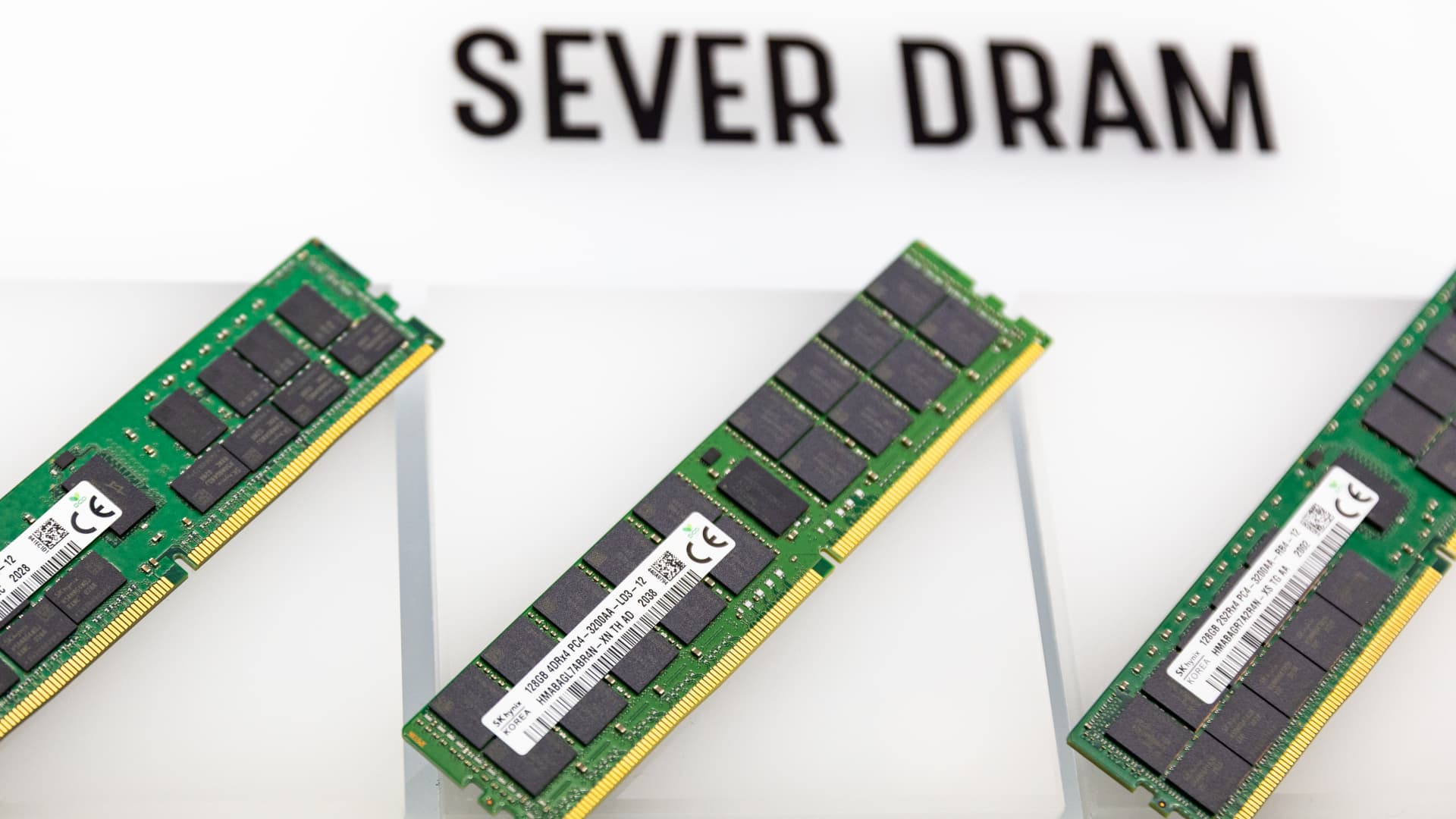

Memory chip ETF DRAM is suddenly the “poster child” for the current boom in semiconductor stocks among retail investors, analysts say.

Retail purchases of DRAM topped $200 million a day in less than four weeks. That beat the dollar-volume pace set by other retail-favorite ETFs, such as the Tesla-leveraged TSLL and the Bitcoin-based BITO, according to Vanda Research.

“The magnitude and speed of flows suggest retail [investors] are increasingly using the ETF as a vehicle to express bullish views on the memory and broader AI infrastructure trade. For now, there are no signs of this buying frenzy slowing down,” Vanda researchers said in a note on Monday.

[Chart: Roundhill Memory ETF (DRAM) vs. ProShares Bitcoin ETF (BITO) since April 1]

ETF issuer Roundhill Investments launched DRAM on April 2 as a way to gain exposure to makers of memory chips, including high-bandwidth memory and solid-state storage devices.

Memory has emerged as a key pressure point in supply chains for the current artificial intelligence buildout. Hyperscalers are worried about shortages and soaring input prices as they race to add capacity for increasingly in-demand AI workloads. Surging demand has strengthened memory makers' pricing power and is lifting their profit margins, with multiple suppliers projecting margins above 70% for 2026. The types of memory most needed for AI processing include the eponymous DRAM and the slower NAND flash memory.

The three largest holdings in the $6 billion DRAM ETF account for roughly three quarters of its assets: Samsung Electronics at 25%, SK Hynix at 24% and Micron Technology at 24%. Together, the three take in 95% of all DRAM revenue and 67% of all NAND revenue, according to Omdia research published by the Semiconductor Industry Association. Other holdings include Kioxia Holdings, SanDisk Corp, Western Digital and Seagate Technology, each at about 5%.
Commercial adoption of “agentic AI” (software that can make its own optimization decisions and adjust workflows within different business processes) has boosted demand for central processing units (CPUs), which rely on large amounts of DRAM and NAND to process data.

CPUs over GPUs

Industry experts say this preference for CPUs over graphics processing units (GPUs) stems from a change in system architecture that favors a newer type of AI.

“Agentic AI workloads are … shifting performance bottlenecks from GPU-centric inference to CPU-heavy orchestration and workflow management,” Daniel Nenni wrote for SemiWiki in April. “Emerging agentic AI systems transform inference into a distributed, multi-step process … This architectural change introduces substantial CPU demand.”

In a report on Monday, Morgan Stanley analyst Shawn Kim raised his estimate for the CPU total addressable market in 2030 by 25%.

“The AI system in the future will look like a distributed system consisting of GPU racks for dense model compute, fast networking and a software stack that can keep it all observable, secure and efficient; [and] agentic CPU racks for orchestration, processing data and tool execution,” he wrote.